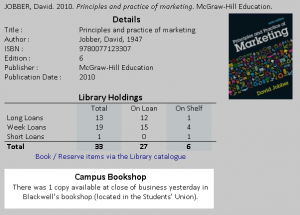

Integration with the campus bookshop

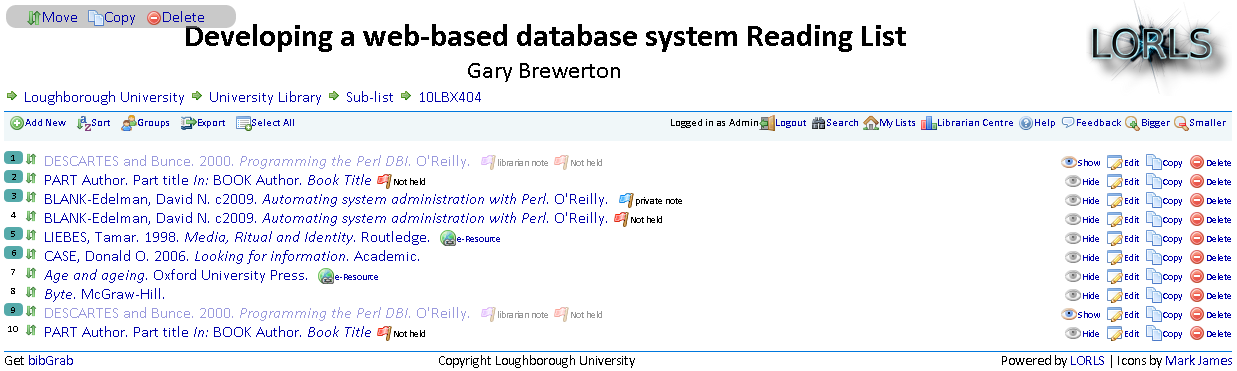

New bulk functions and flags

Since we went live over a month ago, quite a few new features have been added and old features tweaked. The two biggest new features are bulk functions and flags.

Bulk Functions

Bulk functions help editors of large lists who want to move/reorder/copy/delete multiple items.

To select items, the user simply clicks on each item’s rank number, which is then highlighted to show that the item is selected. When any items are selected, the bulk functions menu appears at the top left. There are currently three bulk functions:

- Move

  - Moves the selected items to a point specified in the list. The items being moved can also be sorted at the same time.

- Copy

  - Copies the selected items to the end of the specified reading lists.

- Delete

  - Deletes the selected items.
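To make the Move behaviour concrete, here is a minimal sketch of moving a selection to a chosen point in a list with an optional sort. It is not the CLUMP code: the function name `moveItems` and its parameters are made up for illustration, and it works on a plain array rather than on real list items.

```javascript
// Hypothetical sketch, not the CLUMP implementation.
// items:        the list in its current order
// selected:     indexes of the selected items
// insertBefore: index (in the original list) to move the selection to
// compare:      optional comparator used to sort the items being moved
function moveItems(items, selected, insertBefore, compare) {
  const picked = new Set(selected);

  // The items being moved, initially in their existing list order.
  const moving = items.filter((_, i) => picked.has(i));
  if (compare) {
    moving.sort(compare);
  }

  // Unselected items keep their relative order on either side of the target.
  const head = items.filter((_, i) => i < insertBefore && !picked.has(i));
  const tail = items.filter((_, i) => i >= insertBefore && !picked.has(i));

  return [...head, ...moving, ...tail];
}

console.log(moveItems(["a", "b", "c", "d", "e"], [1, 3], 5));
```

The function returns a new array and leaves the original untouched, which keeps the sketch easy to reason about. A real implementation would also need to save the new rank numbers back to the server.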

Flags

Another new feature is the inclusion of flags for certain situations.

- Private Note

  - If an item has one or more private notes attached to it and the user has permission to access them, then this flag is shown. If the user hovers the cursor over the flag, they get to see the private notes without having to edit the item.

- Librarian Note

  - If an item has one or more librarian notes attached to it and the user has permission to access them, then this flag is shown. If the user hovers the cursor over the flag, they get to see the librarian notes without having to edit the item.

- Not Held

  - This flag is a little more complicated than the previous ones. It is shown if the user is able to see the library-only data for the item, the item is a book or journal, it’s marked as not being held by the library, it doesn’t have a URL data element and it is not marked as “Will not purchase”.

Or, to put it another way, it highlights to librarians the items on a list that they may want to investigate buying.
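Written out as a single test, the Not Held rule looks something like the sketch below. The property names (`canSeeLibraryOnlyData`, `type`, `heldByLibrary`, `url`, `willNotPurchase`) are invented for illustration and are not the actual LUMP data element names.

```javascript
// Hypothetical sketch of the "Not Held" rule; all property names are assumptions.
function showNotHeldFlag(item, user) {
  const isBookOrJournal = item.type === "book" || item.type === "journal";

  return Boolean(
    user.canSeeLibraryOnlyData &&   // user may see the library-only data
    isBookOrJournal &&              // only books and journals are considered
    item.heldByLibrary === false && // explicitly marked as not held
    !item.url &&                    // no URL data element
    !item.willNotPurchase           // not marked "Will not purchase"
  );
}
```

Every condition has to hold before the flag appears, so an item with a URL, or one already marked as “Will not purchase”, is never flagged.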

And we have a launch!

At approximately 11:40 this morning we launched LORLS v6 to students and academics at Loughborough. This was done using our standard importer which extracted data from our previous LORLS 5 installation. We then ran a number of local modification scripts (e.g. to remove years and alter the metadata layout).

This seems like an opportune moment to say a few thank yous to those who contributed to the development and implementation of this new version:

- Jon and Jason, who in the best traditions of a pantomime horse developed the back and front ends

- Ginny and Jenny, for producing promotional and training material

- Theresa, Vicky, Karen, Lynne and Sue, for testing and critiquing the new system

- Sue 2.0, for putting up with us during the database design

- And to all the other library staff, academics and students who provided invaluable input and support for this new version.

And finally as it is Valentine’s Day a little (and I do mean little) poetry:

Roses are red, violets are blue,

LORLS 6 is here, just for you!

T minus 7 days (and counting…)

We are now just one week away from the new version of our reading lists system going into production use here at Loughborough. As part of the build-up to this, library staff have been engaged in various promotional activities such as liaising with key staff in departments, distributing flyers to academics, attending departmental meetings and placing announcements on relevant noticeboards.

In addition to these activities, Ginny (Academic Librarian) and Jenny (e-Learning Officer) have produced an excellent four-minute video demonstrating some of the features of the new version.

AJAX performance boosts

Just recently I have been looking at tweaks that I can make to improve the performance of CLUMP. Here are the ones that I have found make a difference.

Set up Apache to use gzip to compress things before passing them to the browser. It doesn’t make much difference on the smaller XML results, but on the large chunks of XML being returned it reduces the size quite a lot.
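If you want a rough feel for why the size of the response matters, the sketch below gzips a tiny and a large XML document using Node’s built-in `zlib` module and prints how much each one shrinks. The XML structure is made up for the purpose and is not real LUMP output, so treat the numbers as indicative only.

```javascript
const zlib = require("zlib");

// Build an XML document with `count` <item> elements (made-up structure).
function buildXml(count) {
  const items = [];
  for (let i = 0; i < count; i += 1) {
    items.push(`<item id="${i}"><title>Title ${i}</title><author>Author ${i}</author></item>`);
  }
  return `<?xml version="1.0"?><list>${items.join("")}</list>`;
}

// Return the gzipped size as a fraction of the original size.
function gzipRatio(text) {
  const original = Buffer.byteLength(text, "utf8");
  const compressed = zlib.gzipSync(text).length;
  return compressed / original;
}

for (const count of [1, 1000]) {
  const ratio = gzipRatio(buildXml(count));
  console.log(`${count} item(s): compressed to ${(ratio * 100).toFixed(1)}% of original`);
}
```

Small responses gain little because the gzip header and trailer are a fixed cost, while large, repetitive XML compresses very well.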

Here is an extract of the Apache configuration file that we use to compress text, HTML, JavaScript, CSS and XML files before sending them.

# compress text, html, javascript, css, xml:

AddOutputFilterByType DEFLATE text/plain

AddOutputFilterByType DEFLATE text/html

AddOutputFilterByType DEFLATE text/xml

AddOutputFilterByType DEFLATE text/css

AddOutputFilterByType DEFLATE application/xml

AddOutputFilterByType DEFLATE application/xhtml+xml

AddOutputFilterByType DEFLATE application/rss+xml

AddOutputFilterByType DEFLATE application/javascript

AddOutputFilterByType DEFLATE application/x-javascript

Another thing is that if you have a lot of outstanding AJAX requests queued up and the user clicks on something which makes those requests irrelevant, the browser will still process them. Cancelling them frees up the browser to get straight on with the new AJAX requests.

This can be very important in Internet Explorer 7 and below, which only allow 2 concurrent connections to a server over HTTP/1.1. If the unneeded AJAX requests are left to complete rather than being cancelled, it can take Internet Explorer a while to clear the queue, processing only 2 requests at a time.

The good news is that Internet Explorer 8 increases the number of concurrent connections to 6, assuming that you have at least a broadband connection speed, which brings it back into line with most other browsers.
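One way to cancel outstanding requests is to keep track of them as they are issued and abort whatever is still pending when the user moves on. The sketch below is not how CLUMP does it: `RequestTracker` is a made-up helper, and it only assumes that each request object has an `abort()` method, as `XMLHttpRequest` does.

```javascript
// Hypothetical helper: tracks in-flight requests so they can be cancelled together.
// Any object with an abort() method will do, e.g. an XMLHttpRequest in the browser.
class RequestTracker {
  constructor() {
    this.pending = new Set();
  }

  // Register a request; returns a function to call once the request has finished.
  track(request) {
    this.pending.add(request);
    return () => this.pending.delete(request);
  }

  // Abort everything still outstanding and return how many requests were cancelled.
  cancelAll() {
    // Copy first, in case abort() triggers a completion callback that edits the set.
    const outstanding = [...this.pending];
    this.pending.clear();
    for (const request of outstanding) {
      request.abort();
    }
    return outstanding.length;
  }
}

// Browser usage (not run here):
//   const xhr = new XMLHttpRequest();
//   xhr.onloadend = requests.track(xhr);   // where requests is a RequestTracker
//   ...
//   requests.cancelAll();                  // when the user clicks elsewhere
```

Calling `cancelAll()` at the start of each new user action means the browser’s limited pool of connections is free for the requests that actually matter.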

Getting closer to production

Production release of LORLS6 (aka LUMP) here at Loughborough is going to happen (hopefully) in the next month or so. In anticipation of that we’ve been doing some installs and imports of the existing reading lists from LORLS5 so that the librarians can have a play with the system. It’s also allowing us to squish a few bugs and develop a few last-minute features. The big test of course comes when the users start to hit the system.

Searching now added to CLUMP

Last thing I was working on before Christmas was adding searching into CLUMP. CLUMP’s search function uses LUMP’s FindSuid API to find a list of Module structural units which contain the search term in the selected data element (at the minute the data elements supported are Module Title, Module Code and Academic’s name).

There are two reasons that CLUMP searches for Modules rather than reading lists. The main reason is that if a module has multiple reading lists, it is better to take the user to the module to see all the related reading lists.

The second reason is that all the current data elements that can be searched are related to the module structural units and not the reading list structural units, and it would be a bit convoluted to get a list of module structural units and then look up the reading lists for each one.

Christmas comes early

With the snow and ice still covering jolly old Loughborough our thoughts naturally turn to Christmas, or more specifically Christmas presents. Will we get that present we really want or will it be socks yet again! So as you don’t end up with just a pair of socks we thought we’d put a little extra in your stocking: the alpha release of LORLS 6.

Please feel free to download and install this first release of LORLS 6 and let us know what you think of it. However it does come with the following health warning: “This is an alpha release – it’s absolutely NOT recommended for production usage.”

Perl ZOOM issues

We’re getting to the point at Loughborough where we’re considering “going live” early next year with LUMP, replacing the existing LORLSv5 install that we have as our current production reading list system. As such, we’ve just spun up a new virtual server today, to do a test LUMP install on it. This machine has a fresh CentOS installation on it, so needs all the Perl modules loaded. As we use Net::Z3950::ZOOM now, this was one of the modules installed (along with a current YAZ tool chain).

Once we’d got the basic LUMP/CLUMP code base installed on the machine I grabbed the existing LORLS database from the machine it resides on, plus the /usr/local/ReadingLists directory from the LORLSv5 install on there, in order to run the create_structures LUMP importer script. Which then barfed, complaining that “Net::Z3950::RecordSyntax::USMARC” was a bareword that couldn’t be used with strict subs (LUMP, and LORLS before it, makes use of Perl’s “use strict” feature to sanity check code).

Hmm… odd – this problem hadn’t arisen before, and indeed the error appeared to be in the old LORLSv5 ReadingListsItem.pm module, not any of the LUMP code. A bit of delving into the modules eventually turned up the solution: the new Net::Z3950::ZOOM doesn’t do backward compatibility too well. There was a load of code in /usr/lib64/perl5/site_perl/5.8.8/x86_64-linux-thread-multi/Net/Z3950.pm that appeared to implement the old pre-ZOOM Net::Z3950 subroutines, but it was all commented out. I realised that we’d not had this issue before because I’d run the importer on machines that already had LORLSv5 with an older copy of Net::Z3950 on them.

The “solution” was simply to uncomment all the subroutines under the Net::Z3950::RecordSyntax package. The create_structures script doesn’t actually use any of the LORLSv5 Z3950 stuff anyway, so it’s not an issue – we just need the old LORLSv5 modules to install so that we can use them to access material in the old database. I guess this goes to show the problems you can accrue when you reuse a namespace for a non-backwardly compatible API.

Adding and removing group members

Jason and I have been batting code back and forth for the last couple of weeks to provide an API and CLUMP interface to adding and removing people from usergroups. We’ve gone through several iterations of designs and implementations and now have something that seems to be working OK on our development installation – hopefully soon to appear on the demo sandbox (unless Gary decides that he wants it to work in a different way! 😉 ).

With that more or less done, and some POD documentation tweaks (‘cos I’d been doing a bit too much cutting and pasting between scripts!), I can now go back to dealing with some of the backend management scripts. The first two of these will be a simple link checker and a cron-able script to (re-)populate the “held by library” information. Gary has a couple more reports that Library staff have asked for, but they might just require a couple of APIs to be produced to allow existing reporting systems to work (they weren’t part of the distributed LORLS code base – just local Loughborough reports).

Gary has presented the new system to several groups recently with mostly positive feedback. We’ve just installed a link to this blog (and thus the demo sandbox system) into the live LORLS installation’s managelist script at Loughborough so that more academics will get a heads-up that something new is around the corner.